From Word Docs to Git: Building a Living SSP with compliance-trestle and AWS Config

There is a document sitting somewhere in your organization right now, a Word file probably named something like SSP_Final_v3_REVIEWED_actualfinal.docx. It describes how your system implements 325 NIST 800-53 Rev 5 controls, and it was accurate the day your 3PAO signed off on it. It has not been accurate since.

This is not a process failure; it is an architectural one. You built your compliance posture as a document instead of as a system, and documents do not know when your S3 buckets change, your CloudTrail gets disabled, or an IAM user loses their MFA at 2 a.m. on a Friday. The federal government is slowly figuring this out. FedRAMP 20x and RFC-0024 are pushing for machine-readable authorization packages. The RFC’s comment period closed in March 2026 with no formal outcome yet, but the direction is clear: compliance needs to be code, and your SSP should live in that code too.

I have known about compliance-trestle for some time. I starred the repo, read the docs, and understood the concept, but I had never actually used it. I finally sat down, spun up trestle, connected some AWS Config rules, and built a pipeline to test out some automations. This post is what came out of that session: a hands-on walkthrough with a GitHub Actions pipeline that fails the build when critical controls are NON_COMPLIANT.

What I Ended Up Building

Here is the architecture of what I wired together:

    AWS Config Rules (6 managed rules)
        ↓
    Compliance Findings (COMPLIANT / NON_COMPLIANT)
        ↓
    Python evidence bridge → OSCAL Assessment Results
        ↓
    Trestle workspace → Component Definitions
        ↓
    SSP control narratives inherit from components
        ↓
    NON_COMPLIANT findings route → OSCAL POA&M
        ↓
    GitHub Actions: pipeline fails on critical NON_COMPLIANT findings

The key insight is that every layer of this stack is already producing structured data. AWS Config returns JSON, OSCAL is JSON, and trestle manages the files and enforces the schema, so there is no reason a human should be manually transcribing compliance findings into a spreadsheet.

Background: What is compliance-trestle?

If you are reading this blog, you may already know what trestle is. But for completeness, I’ll explain at a high level. compliance-trestle is an open-source Python toolchain from the OSCAL Compass project, originally built by IBM and now a CNCF sandbox project. It treats your compliance artifacts the same way Terraform treats your infrastructure, as structured files in a repository, validated by schema, versioned in git, and deployable through a pipeline.

The thing I had not felt until I actually ran it is how trestle tames OSCAL’s complexity. OSCAL documents are rich, nested JSON structures that are technically machine-readable but can be unworkable for humans to edit directly, and trestle splits them into a human-navigable directory structure, enforces schema on every edit, and reassembles them on demand. Your SSP lives in git like any other codebase where control narratives become pull-requestable files and the audit trail is not a separate artifact but the git history itself.

Why AWS Config?

AWS Config is underutilized as a compliance tool. Most teams use it for drift detection and cost governance, but it is also a continuous compliance engine with over 400 managed rules, hundreds of which map directly to NIST 800-53, PCI-DSS, CIS Benchmarks, and FedRAMP controls through conformance packs.

The property that makes Config useful here is that it produces structured, timestamped, attributable findings. A Config rule evaluation tells you exactly which resource was evaluated, what the compliance status was, which rule it ran against, and when, which is essentially the shape of an OSCAL observation. The translation layer between the two is thin.
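To make that "thin translation layer" concrete, here is a minimal sketch of mapping a single Config evaluation result onto an OSCAL-style observation. The input keys follow the `EvaluationResults` entries that boto3 returns from `get_compliance_details_by_config_rule`. The function name, the `compliance-type`, `resource-type`, and `resource-id` property names, and the reuse of the `https://aws.amazon.com/config` namespace are my own assumptions for illustration, not anything trestle or OSCAL prescribes.

```python
import uuid
from datetime import datetime, timezone

# Namespace reused from the component definitions in this post (an assumption here).
CONFIG_NS = "https://aws.amazon.com/config"


def config_result_to_observation(result: dict) -> dict:
    """Map one AWS Config EvaluationResult to an OSCAL-style observation dict."""
    qualifier = result["EvaluationResultIdentifier"]["EvaluationResultQualifier"]
    rule = qualifier["ConfigRuleName"]
    recorded = result["ResultRecordedTime"]
    # boto3 returns timezone-aware datetimes; OSCAL wants a timestamp string.
    if isinstance(recorded, datetime):
        recorded = recorded.astimezone(timezone.utc).isoformat()

    def prop(name: str, value: str) -> dict:
        return {"name": name, "ns": CONFIG_NS, "value": value}

    return {
        "uuid": str(uuid.uuid4()),
        "title": f"{rule}: {result['ComplianceType']}",
        "description": (
            f"AWS Config evaluated {qualifier['ResourceType']} "
            f"{qualifier['ResourceId']} against rule {rule}."
        ),
        "methods": ["TEST"],      # automated check rather than EXAMINE/INTERVIEW
        "collected": recorded,    # when Config recorded the result
        "props": [
            prop("validation-rule", rule),
            prop("compliance-type", result["ComplianceType"]),
            prop("resource-type", qualifier["ResourceType"]),
            prop("resource-id", qualifier["ResourceId"]),
        ],
    }
```

Which resource, which rule, what status, and when: each of the four facts Config reports lands in exactly one field of the observation, which is why the bridge can stay small.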

Here are the six Config rules in this demo and their NIST 800-53 Rev 5 mappings:

| AWS Config Rule | Control | Notes |
|---|---|---|
| s3-bucket-server-side-encryption-enabled | SC-28 | Encryption at rest (S3) |
| s3-bucket-ssl-requests-only | SC-8 | Transmission confidentiality |
| ec2-ebs-encryption-by-default | SC-28 | Encryption at rest (EBS) |
| cloudtrail-enabled | AU-2, AU-3 | Audit event generation |
| iam-user-mfa-enabled | IA-2(1) | MFA for all IAM users |
| dynamodb-pitr-enabled | CP-9 | Point-in-time recovery / backup |

Notice that SC-28 is satisfied by two separate Config rules covering S3 and EBS. A hand-authored SSP usually has a single narrative for SC-28 asserting that the system uses AES-256 encryption. An automated evidence pipeline proves it for each resource class, continuously, with timestamps. That is the difference between asserting compliance and demonstrating it.

The demo also intentionally includes rules that return NON_COMPLIANT: cloudtrail-enabled in an environment with no active trail, dynamodb-pitr-enabled on a table without PITR configured, and s3-bucket-ssl-requests-only on buckets without an explicit deny-HTTP policy. Those findings are the point. The pipeline catches them, routes them to an OSCAL POA&M, and blocks the build.

Step 1: Setting Up Your Trestle Workspace

First, install trestle and initialize a workspace:

pip install compliance-trestle

mkdir trestle-blog && cd trestle-blog

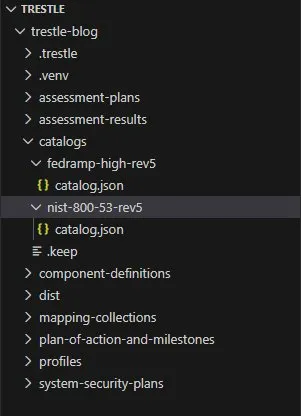

trestle initTrestle creates a structured workspace with directories for each OSCAL artifact type: catalogs, profiles, component-definitions, system-security-plans, assessment-results, and plan-of-action-and-milestones. This directory structure is your compliance repository going forward.

Next, pull in the NIST 800-53 Rev 5 catalog and the FedRAMP High Rev 5 baseline, which GSA published as a resolved-profile catalog:

    trestle import -f https://raw.githubusercontent.com/usnistgov/oscal-content/main/nist.gov/SP800-53/rev5/json/NIST_SP-800-53_rev5_catalog.json -o nist-800-53-rev5
    trestle import -f https://raw.githubusercontent.com/GSA/fedramp-automation/master/dist/content/rev5/baselines/json/FedRAMP_rev5_HIGH-baseline-resolved-profile_catalog.json -o fedramp-high-rev5

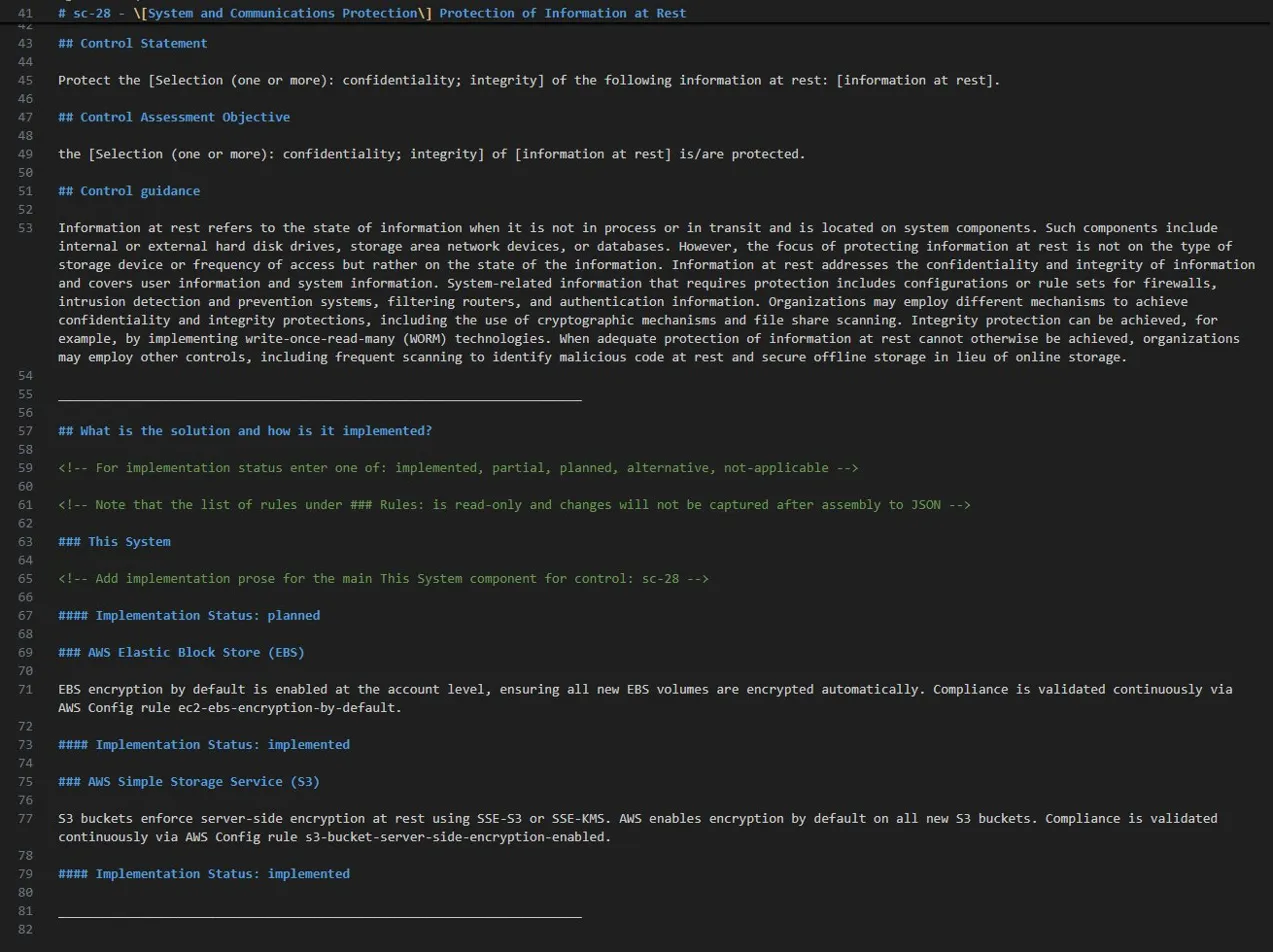

Take a moment with the directory structure here. Each catalog control is its own navigable entry. This is what it looks like when SC-28 is a file you can diff, not a paragraph buried in a 200-page Word document.

A note on the FedRAMP OSCAL baseline: the GSA/fedramp-automation repository, the official home of FedRAMP OSCAL profiles and resolved catalogs, was archived in July 2025 with no announced replacement. The catalog imported above is the last officially published FedRAMP OSCAL High baseline. That gap is worth naming directly. FedRAMP 20x is actively shifting federal authorization toward machine-readable packages and continuous validation pipelines, but the official tooling infrastructure to support that change is still catching up to the vision. Teams building this pipeline now will swap a URL when the new baseline materializes. They will not rebuild from scratch.

Step 2: Building Component Definitions

A component definition in OSCAL describes a technology component and documents which controls it satisfies and how. Think of it as a reusable compliance building block. We are building five, one per AWS service in scope.

    trestle create -t component-definition -o aws-s3
    trestle create -t component-definition -o aws-ebs
    trestle create -t component-definition -o aws-cloudtrail
    trestle create -t component-definition -o aws-iam
    trestle create -t component-definition -o aws-dynamodb

The key addition in each component definition is the validation-rule property on each implemented requirement. This creates an explicit machine-readable link between the control narrative and the AWS Config rule that validates it:

    {
      "component-definition": {
        "uuid": "d25becbb-4e6a-4118-a017-f22feb680cd6",
        "metadata": {
          "title": "AWS S3 Component Definition",
          "last-modified": "2026-03-17T12:51:12.486645-04:00",
          "version": "0.0.1",
          "oscal-version": "1.2.1"
        },
        "components": [
          {
            "uuid": "ead5f6cc-e373-452a-8626-4f7e9f4764b2",
            "type": "service",
            "title": "AWS Simple Storage Service (S3)",
            "description": "Amazon S3 object storage service deployed within the system boundary.",
            "control-implementations": [
              {
                "uuid": "2150908b-961f-4659-8395-ad0678b37f6a",
                "source": "trestle://profiles/fedramp-high-rev5/profile.json",
                "description": "FedRAMP High Rev 5 control implementations for AWS S3.",
                "implemented-requirements": [
                  {
                    "uuid": "05412571-2448-471c-b2d2-98e0cfcbda4c",
                    "control-id": "sc-28",
                    "description": "S3 buckets enforce server-side encryption at rest using SSE-S3 or SSE-KMS. AWS enables encryption by default on all new S3 buckets. Compliance is validated continuously via AWS Config rule s3-bucket-server-side-encryption-enabled.",
                    "props": [
                      {
                        "name": "validation-rule",
                        "ns": "https://aws.amazon.com/config",
                        "value": "s3-bucket-server-side-encryption-enabled"
                      },
                      {
                        "name": "implementation-status",
                        "ns": "https://fedramp.gov/ns/oscal",
                        "value": "implemented"
                      }
                    ]
                  },
                  {
                    "uuid": "5f5b0bfa-068e-4d14-93d9-68c80b46122c",
                    "control-id": "sc-8",
                    "description": "S3 bucket policies enforce HTTPS-only access, denying all HTTP requests. Transmission confidentiality is validated continuously via AWS Config rule s3-bucket-ssl-requests-only.",
                    "props": [
                      {
                        "name": "validation-rule",
                        "ns": "https://aws.amazon.com/config",
                        "value": "s3-bucket-ssl-requests-only"
                      },
                      {
                        "name": "implementation-status",
                        "ns": "https://fedramp.gov/ns/oscal",
                        "value": "planned"
                      }
                    ]
                  }
                ]
              }
            ]
          }
        ]
      }
    }

For controls that are NON_COMPLIANT in the current environment (CloudTrail, DynamoDB PITR, and S3 SSL enforcement in this demo), implementation-status is set to planned rather than implemented, which is the honest OSCAL representation that the control is defined but not yet fully in place. The evidence bridge routes those to the POA&M automatically.
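Because the validation-rule property is plain JSON, a script can read the control-to-rule link straight back out of a component definition instead of keeping a second copy by hand. The sketch below is one way that might look. The function name `rules_by_control` is hypothetical, and it assumes the property name and namespace used in the component definition above.

```python
from collections import defaultdict

CONFIG_NS = "https://aws.amazon.com/config"


def rules_by_control(compdef: dict) -> dict[str, list[str]]:
    """Collect {control-id: [Config rule names]} from one component definition."""
    mapping: dict[str, list[str]] = defaultdict(list)
    for component in compdef["component-definition"].get("components", []):
        for impl in component.get("control-implementations", []):
            for req in impl.get("implemented-requirements", []):
                for prop in req.get("props", []):
                    # Only props that name an AWS Config rule are of interest here.
                    if prop.get("name") == "validation-rule" and prop.get("ns") == CONFIG_NS:
                        mapping[req["control-id"]].append(prop["value"])
    return dict(mapping)
```

Loading the S3 component definition with `json.load` and passing it to `rules_by_control` would return the SC-28 and SC-8 entries with their respective S3 rules.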

Step 3: The Evidence Bridge Script

How do you get from AWS Config findings to OSCAL observations without a lot of manual translation work? The answer is a thin Python bridge, since every layer is already producing structured data and the script just handles the translation between them.

    CONTROL_RULE_MAP = {
        "sc-28": [
            "s3-bucket-server-side-encryption-enabled",
            "ec2-ebs-encryption-by-default",
        ],
        "sc-8": ["s3-bucket-ssl-requests-only"],
        "au-2": ["cloudtrail-enabled"],
        "au-3": ["cloudtrail-enabled"],
        "ia-2.1": ["iam-user-mfa-enabled"],
        "cp-9": ["dynamodb-pitr-enabled"],
    }
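A map like this is what lets rule-level results roll up to control-level status. As a rough sketch, assuming a worst-case policy that I chose for illustration (one NON_COMPLIANT rule fails the control, and a control only passes when every mapped rule is COMPLIANT), the rollup might look like the following. The function name and the fallback to INSUFFICIENT_DATA are my assumptions.

```python
def control_status(control_rule_map: dict, rule_status: dict) -> dict:
    """Roll per-rule Config statuses up to a single status per control."""
    statuses = {}
    for control_id, rules in control_rule_map.items():
        # A rule with no recorded result is treated as missing evidence.
        results = [rule_status.get(rule, "INSUFFICIENT_DATA") for rule in rules]
        if "NON_COMPLIANT" in results:
            statuses[control_id] = "NON_COMPLIANT"
        elif all(result == "COMPLIANT" for result in results):
            statuses[control_id] = "COMPLIANT"
        else:
            statuses[control_id] = "INSUFFICIENT_DATA"
    return statuses


example_map = {
    "sc-28": ["s3-bucket-server-side-encryption-enabled", "ec2-ebs-encryption-by-default"],
    "au-2": ["cloudtrail-enabled"],
}
example_results = {
    "s3-bucket-server-side-encryption-enabled": "COMPLIANT",
    "ec2-ebs-encryption-by-default": "COMPLIANT",
    "cloudtrail-enabled": "NON_COMPLIANT",
}
print(control_status(example_map, example_results))
# {'sc-28': 'COMPLIANT', 'au-2': 'NON_COMPLIANT'}
```

SC-28 only reports COMPLIANT here because both the S3 and the EBS rule passed, which is the per-resource-class proof that a single hand-written narrative cannot give you.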

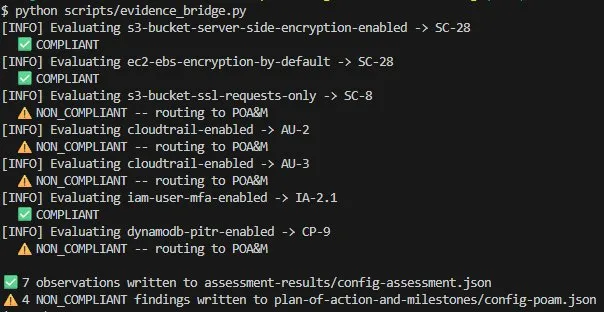

What you are seeing in that terminal output is real evidence collection, not a screenshot pasted into a spreadsheet or a narrative assertion written months ago, but timestamped, structured, machine-readable findings from a live AWS environment transformed into valid OSCAL in under 30 seconds.
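The collection side of a bridge like this can be very small. The following is a hedged sketch rather than the actual script: it assumes a boto3 Config client is passed in (for example `boto3.client("config")`) and uses the `get_compliance_details_by_config_rule` paginator. The helper names `collect_evaluations` and `rule_status` are hypothetical.

```python
def collect_evaluations(config_client, rule_names):
    """Return {rule_name: [EvaluationResult, ...]} for the given Config rules."""
    paginator = config_client.get_paginator("get_compliance_details_by_config_rule")
    findings = {}
    for rule in rule_names:
        results = []
        # Each page carries a list of per-resource evaluation results.
        for page in paginator.paginate(ConfigRuleName=rule):
            results.extend(page.get("EvaluationResults", []))
        findings[rule] = results
    return findings


def rule_status(results):
    """Summarise per-resource results: any NON_COMPLIANT resource fails the rule."""
    types = {result["ComplianceType"] for result in results}
    if "NON_COMPLIANT" in types:
        return "NON_COMPLIANT"
    if "COMPLIANT" in types:
        return "COMPLIANT"
    return "INSUFFICIENT_DATA"
```

Passing the client in as an argument, instead of creating it inside the function, keeps the function easy to exercise with a stub when no AWS credentials are available.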

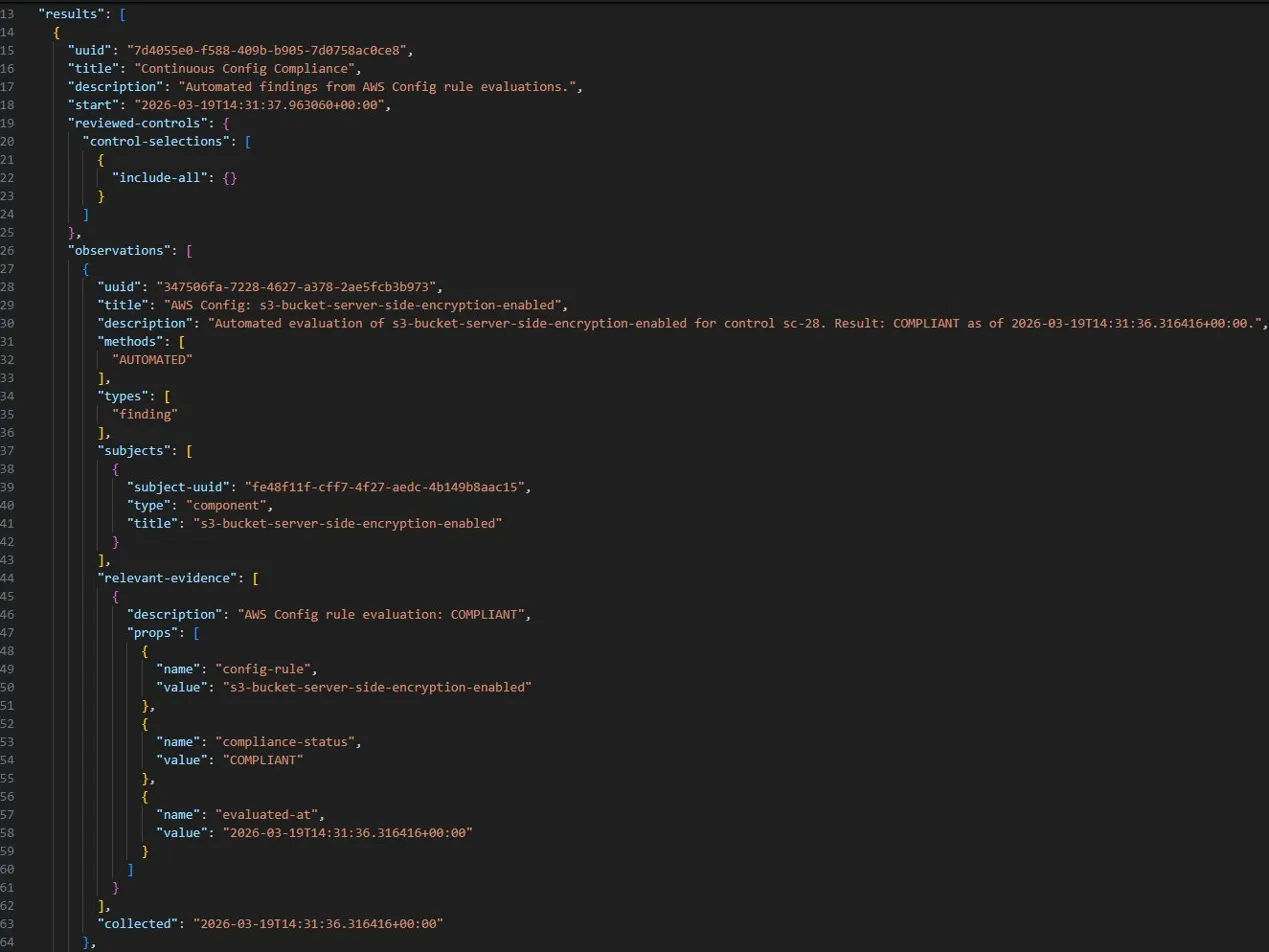

Here is what the generated OSCAL assessment results look like, with each observation structured as a machine-readable finding:

Step 4: POA&M Generation for NON_COMPLIANT Findings

When a Config rule returns NON_COMPLIANT, the evidence bridge routes that finding into an OSCAL POA&M entry automatically. You already know what the Excel version looks like, so here is what the OSCAL version looks like:

    {
      "plan-of-action-and-milestones": {
        "uuid": "f3d8a1c6-9b2e-4d17-a5f0-8c6e3b7d2a91",
        "metadata": {
          "title": "AWS Config Compliance POA&M",
          "last-modified": "2026-03-19T14:31:36.316416+00:00",
          "version": "0.0.1",
          "oscal-version": "1.2.1"
        },
        "poam-items": [
          {
            "uuid": "1a3e5c7e-a0e6-4c10-9e97-baa92c1f4d08",
            "title": "NON_COMPLIANT: s3-bucket-ssl-requests-only",
            "description": "AWS Config rule s3-bucket-ssl-requests-only returned NON_COMPLIANT. Maps to control sc-8. Requires remediation.",
            "related-observations": [
              { "observation-uuid": "7d4b55e0-f588-4b9b-b065-7d0738ac0ce8" }
            ],
            "status": "open"
          },
          {
            "uuid": "3a61c2e4-a0e6-4c10-9e97-daef2c1b7e53",
            "title": "NON_COMPLIANT: cloudtrail-enabled",
            "description": "AWS Config rule cloudtrail-enabled returned NON_COMPLIANT. Maps to control au-2. Requires remediation.",
            "related-observations": [
              { "observation-uuid": "a9790dfa-7228-4627-a17b-3ae5fcb3b973" }
            ],
            "status": "open"
          }
        ]
      }
    }

The OSCAL POA&M is not just a prettier spreadsheet; it is linkable. Each entry references the specific observation UUID from the assessment results, which in turn references the component definition, which references the SSP control, making the entire chain of evidence explicit and machine-traversable.
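The routing step itself is mostly string formatting plus that UUID link. Here is a minimal sketch of how NON_COMPLIANT findings might be turned into entries carrying the title, description, and related-observations fields shown above. The input shape (dicts with `rule`, `status`, and `observation-uuid` keys) and the function names are assumptions of mine. Deriving each item UUID with `uuid.uuid5` from the observation UUID is also my own choice, so that the same observation always yields the same item UUID.

```python
import uuid


def poam_item(rule: str, control_ids: list, observation_uuid: str) -> dict:
    """Build one POA&M entry that points back at the observation behind it."""
    controls = ", ".join(control_ids)
    return {
        # Deterministic UUID: the same observation always yields the same item.
        "uuid": str(uuid.uuid5(uuid.NAMESPACE_URL, f"poam-item/{observation_uuid}")),
        "title": f"NON_COMPLIANT: {rule}",
        "description": (
            f"AWS Config rule {rule} returned NON_COMPLIANT. "
            f"Maps to control {controls}. Requires remediation."
        ),
        "related-observations": [{"observation-uuid": observation_uuid}],
    }


def build_poam_items(findings: list, controls_for_rule: dict) -> list:
    """Keep only NON_COMPLIANT findings and convert each into a POA&M entry."""
    return [
        poam_item(f["rule"], controls_for_rule.get(f["rule"], []), f["observation-uuid"])
        for f in findings
        if f["status"] == "NON_COMPLIANT"
    ]
```

The `related-observations` entry is the part that matters: it is what lets a reviewer's tooling walk from a POA&M line back to the timestamped evidence.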

In this demo the pipeline surfaces four NON_COMPLIANT findings automatically: SC-8 (transmission confidentiality), AU-2 and AU-3 (audit logging), and CP-9 (backup). Three different control families, one pipeline run, zero manual effort.

When your 3PAO asks for your POA&M at the next assessment, you are not emailing them an Excel file. You are giving them a structured document that can be ingested directly into their tooling, which is exactly what RFC-0024 is trying to normalize across the FedRAMP ecosystem.

Step 5: The GitHub Actions Pipeline

Wire the evidence bridge into a GitHub Actions workflow. The pipeline runs on every push to main, on pull requests that touch the OSCAL artifact directories, and on a daily schedule, pulling fresh Config findings from AWS and failing the build if critical controls are NON_COMPLIANT:

    name: Compliance Validation

    on:
      push:
        branches: [main]
      pull_request:
        paths:
          - 'component-definitions/**'
          - 'assessment-results/**'
          - 'plan-of-action-and-milestones/**'
      schedule:
        - cron: '0 6 * * *'

    jobs:
      validate-compliance:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4

          - name: Set up Python
            uses: actions/setup-python@v5
            with:
              python-version: '3.11'

          - name: Install dependencies
            run: pip install compliance-trestle boto3

          - name: Configure AWS credentials
            uses: aws-actions/configure-aws-credentials@v4
            with:
              aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
              aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
              aws-region: us-east-1

          - name: Run evidence bridge
            run: python scripts/evidence_bridge.py

          - name: Check for critical NON_COMPLIANT findings
            run: |
              python -c "
              import json, sys
              with open('plan-of-action-and-milestones/config-poam.json') as f:
                  poam = json.load(f)
              items = poam['plan-of-action-and-milestones']['poam-items']
              critical = ['cloudtrail-enabled', 'dynamodb-pitr-enabled']
              failures = [i for i in items if any(c in i['title'] for c in critical)]
              if failures:
                  print(f'FAILED: {len(failures)} critical NON_COMPLIANT findings in POA&M')
                  for f in failures:
                      print(f' - {f[\"title\"]}')
                  sys.exit(1)
              print('All critical controls COMPLIANT')
              "

          - name: Validate OSCAL artifacts
            run: trestle validate -a

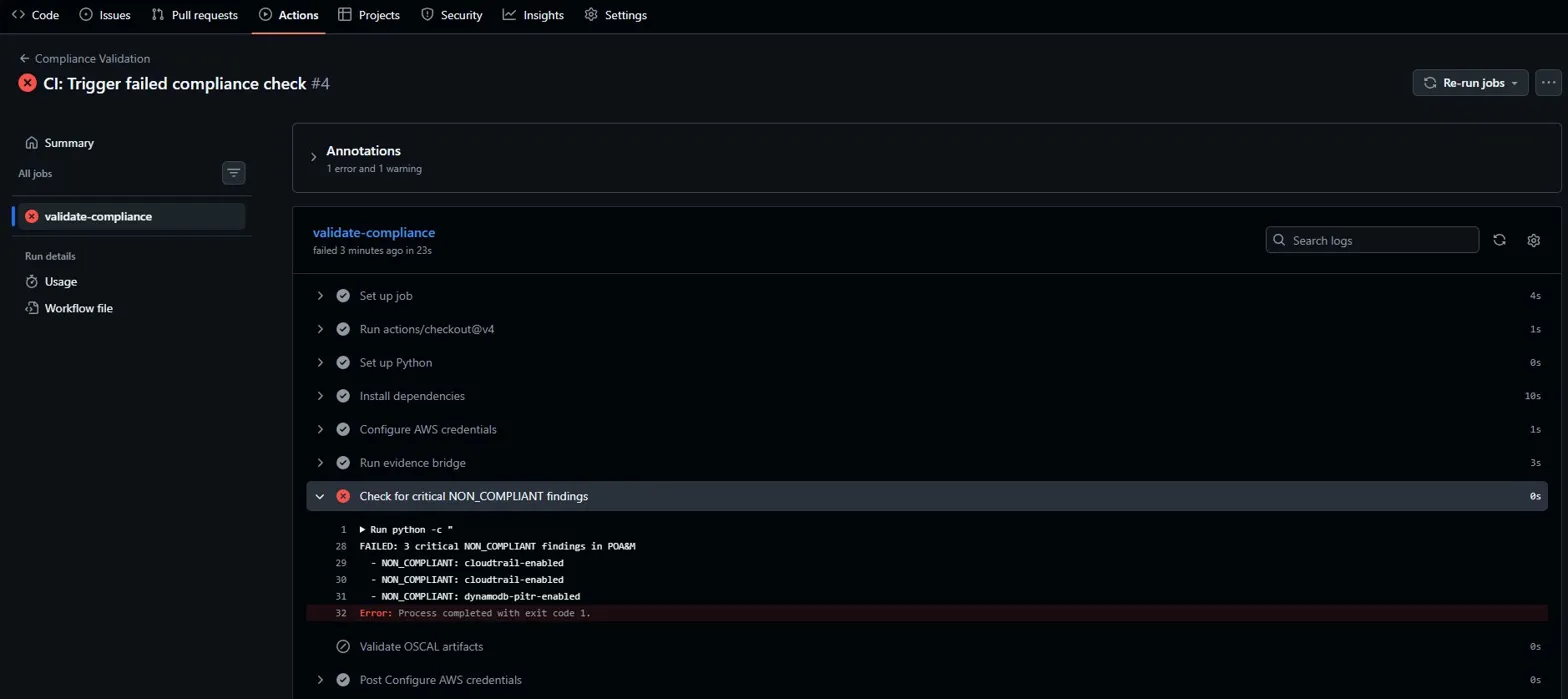

The pipeline blocked the build because CloudTrail is not enabled and DynamoDB PITR is off, leaving AU-2, AU-3, and CP-9 as unmitigated open findings in the POA&M. The build stays red until those controls are remediated and the evidence bridge confirms it on the next run.
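The inline check in the workflow is compact, but the same logic can be pulled into a small function that is easier to test and extend. This is a sketch under the same assumptions as the workflow step (critical rules are matched by name against the POA&M item titles). The names `CRITICAL_RULES`, `critical_failures`, and `gate` are hypothetical.

```python
CRITICAL_RULES = ("cloudtrail-enabled", "dynamodb-pitr-enabled")


def critical_failures(poam: dict, critical_rules=CRITICAL_RULES) -> list:
    """Return the titles of POA&M items that mention a critical Config rule."""
    items = poam["plan-of-action-and-milestones"].get("poam-items", [])
    return [
        item["title"]
        for item in items
        if any(rule in item["title"] for rule in critical_rules)
    ]


def gate(poam: dict) -> int:
    """Print a summary and return the exit code the CI step should use."""
    failures = critical_failures(poam)
    if failures:
        print(f"FAILED: {len(failures)} critical NON_COMPLIANT findings in POA&M")
        for title in failures:
            print(f" - {title}")
        return 1
    print("All critical controls COMPLIANT")
    return 0
```

A script wrapping this would load `plan-of-action-and-milestones/config-poam.json` with `json.load` and call `sys.exit(gate(poam))`. An S3 SSL finding would still appear in the POA&M but would not fail the build, because that rule is not in the critical list.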

The scheduled daily run is the continuous authorization piece. If something drifts, whether CloudTrail gets disabled in a region, a new DynamoDB table is created without PITR, or an IAM user loses their MFA, the pipeline catches it. The authorizing official does not find out at the next annual assessment. They find out the next business day.

Step 6: The Full SSP View

This is where the GRC engineering shift becomes most visible. Assembling the SSP is no longer a writing exercise; it is a composition exercise:

    trestle author ssp-generate \
      --profile nist-high-rev5 \
      --compdefs aws-s3,aws-ebs,aws-cloudtrail,aws-iam,aws-dynamodb \
      --output demo-ssp

Trestle generates a markdown file for each control, pre-populated with the implementation text from your component definitions. SC-28 shows two inherited implementations (S3 and EBS), each with a reference to the Config rule validating it, so your control narrative writers just fill in the system-specific context while the component-level evidence is already in place.

This is the agile authoring model: engineers manage component definitions and the evidence pipeline, compliance writers manage control narratives, and both are working in git with peer review and version history.

What This Looks Like to FedRAMP Reviewers

Here is the shift this creates in the authorization conversation.

Today, a FedRAMP package review for SC-28 looks something like this: the reviewer reads a narrative paragraph asserting that the system uses encryption at rest, looks for a supporting screenshot of the S3 console, and marks it reviewed.

With this pipeline, SC-28 looks like this: the reviewer sees an OSCAL component definition with a machine-readable reference to two Config rules, linked to an assessment results document with timestamped COMPLIANT observations for every S3 bucket and EBS volume in the environment, generated hours ago by an automated pipeline. The evidence is not a screenshot taken during a pre-assessment scramble; it is a continuous record.

And the NON_COMPLIANT findings are not buried in an email thread or discovered during the assessment. They are in the POA&M with control IDs, timestamps, and open status, generated by the same pipeline that validated the passing controls, so the authorizing official sees the full picture rather than a curated snapshot.

RFC-0024 is trying to make this version of the review the standard. The vendors who build this pipeline now are not just doing compliance better; they are building the capability that the next generation of federal authorization will require.

What to Build Next

This post covers the core of the pipeline. From here, the natural extensions are:

- Expanding the Config rule library: there are over 400 managed rules. Mapping the full FedRAMP High control baseline to Config rules is a significant project and a reusable artifact the ecosystem badly needs.

- Adding AWS Security Hub: Security Hub aggregates Config findings with findings from Inspector and Macie. The same evidence bridge pattern applies, and you get a much richer observation set across more control families.

- Integrating with a CSPM: tools like Wiz or Prisma Cloud produce structured findings that map to NIST controls. The OSCAL observation model handles any structured finding source.

- Automating SSP updates: when a component definition changes, trigger a pipeline that regenerates the affected SSP sections and opens a PR for review. Full GitOps for your authorization package.
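For the Security Hub extension in the list above, the main work would be normalizing findings into the same shape the bridge already handles. Below is a rough sketch, assuming findings arrive in the AWS Security Finding Format (ASFF) and reading only the `GeneratorId`, `Title`, `Compliance`, `Resources`, and `UpdatedAt` fields. The output keys and the function name are hypothetical, and mapping anything other than PASSED or FAILED to INSUFFICIENT_DATA is a simplification I chose.

```python
# ASFF compliance statuses translated into the Config vocabulary used in this post.
ASFF_TO_CONFIG_STATUS = {"PASSED": "COMPLIANT", "FAILED": "NON_COMPLIANT"}


def normalize_asff(finding: dict) -> dict:
    """Reduce one ASFF finding to the fields an evidence bridge would need."""
    compliance = finding.get("Compliance", {})
    return {
        "source": "security-hub",
        "rule": finding.get("GeneratorId", ""),
        "title": finding.get("Title", ""),
        # WARNING and NOT_AVAILABLE are treated as missing evidence in this sketch.
        "status": ASFF_TO_CONFIG_STATUS.get(compliance.get("Status"), "INSUFFICIENT_DATA"),
        "resources": [resource["Id"] for resource in finding.get("Resources", [])],
        "collected": finding.get("UpdatedAt"),
        "related-requirements": compliance.get("RelatedRequirements", []),
    }
```

Once every source is reduced to rule, status, resources, and timestamp, the observation and POA&M steps do not need to know where a finding came from.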

The goal is a system where your authorization package reflects the actual state of your environment, continuously, without a team of people manually keeping it current. FedRAMP 20x is building toward a future where continuous authorization at scale requires exactly this kind of pipeline, and the tools and standards are already converging to make it possible. The only question is whether you build it now or scramble to build it when the mandate lands.